This is the Linux app named Intel neon, whose latest release can be downloaded as OptimizedCPUperformanceonmacOSwithnewMKLMLsupport,improvedSSDCPUperformance.zip. It can be run online through the free hosting provider OnWorks for workstations.

Download this app named Intel neon and run it online with OnWorks for free.

Follow these instructions in order to run this app:

- 1. Download this application to your PC.

- 2. Open our file manager https://www.onworks.net/myfiles.php?username=XXXXX with the username that you want.

- 3. Upload this application to that file manager.

- 4. Start the OnWorks Linux online, Windows online, or macOS online emulator from this website.

- 5. From the OnWorks Linux OS you have just started, go to our file manager https://www.onworks.net/myfiles.php?username=XXXXX with the username that you want.

- 6. Download the application, install it and run it.

SCREENSHOTS

Intel neon

DESCRIPTION

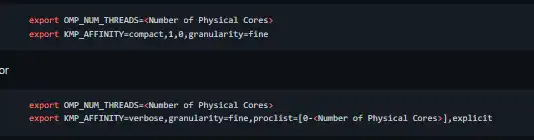

neon is Intel's reference deep learning framework, committed to best performance on all hardware and designed for ease of use and extensibility. See the new features in our latest release. We want to highlight that neon v2.0.0+ has been optimized for much better performance on CPUs by enabling the Intel Math Kernel Library (MKL). The DNN (Deep Neural Networks) component of MKL that is used by neon is provided free of charge and downloaded automatically as part of the neon installation. The gpu backend is selected by default if a compatible GPU resource is found on the system. The Intel Math Kernel Library takes advantage of the parallelization and vectorization capabilities of Intel Xeon and Xeon Phi systems. When hyperthreading is enabled on the system, we recommend setting KMP_AFFINITY so that parallel threads are mapped 1:1 to the available physical cores.
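The exact KMP_AFFINITY value is not reproduced on this page. As a minimal sketch, the snippet below sets the thread-affinity environment from Python before any MKL-backed library is imported. The value `granularity=fine,compact,1,0` is an assumption taken from the general Intel OpenMP runtime conventions, not a setting quoted from neon, and the helper name `physical_core_affinity_env` and the core count of 4 are hypothetical.

```python
import os


def physical_core_affinity_env(physical_cores):
    """Build environment settings that aim for one OpenMP thread per physical core.

    ASSUMPTION: the KMP_AFFINITY value follows the Intel OpenMP runtime's
    documented form; it is not copied from the neon documentation.
    """
    if physical_cores < 1:
        raise ValueError("physical_cores must be at least 1")
    return {
        # One thread per physical core, ignoring hyperthread siblings.
        "OMP_NUM_THREADS": str(physical_cores),
        # Fine granularity binds each thread to a single hardware thread;
        # 'compact,1,0' spreads consecutive threads across different cores first.
        "KMP_AFFINITY": "granularity=fine,compact,1,0",
    }


# Hypothetical machine with 4 physical cores (8 logical with hyperthreading).
settings = physical_core_affinity_env(physical_cores=4)

# These must be set before the OpenMP runtime is loaded, i.e. before
# importing the framework in the same process.
os.environ.update(settings)
```

The same variables can be exported in the shell instead. Setting them in Python only takes effect if it happens before the library that loads the OpenMP runtime is imported.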

Features

- Tutorials and IPython notebooks to get users started with using neon for deep learning

- Support for commonly used layers: convolution, RNN, LSTM, GRU, BatchNorm, and more

- Model Zoo contains pre-trained weights and example scripts for state-of-the-art models

- VGG, Reinforcement learning, Deep Residual Networks, Image Captioning, Sentiment analysis, and more

- Swappable hardware backends

- Write code once and then deploy on CPUs, GPUs, or Nervana hardware

Programming Language

Python

Categories

This is an application that can also be fetched from https://sourceforge.net/projects/intel-neon.mirror/. It has been hosted in OnWorks so that it can be run online in the easiest way from one of our free operating systems.