This is the Windows app named DeepSeed, whose latest release can be downloaded as v0.8.3_Patchrelease.zip. It can be run online through OnWorks, a free hosting provider for workstations.

Download this app named DeepSeed and run it online with OnWorks for free.

Follow these instructions in order to run this app:

- 1. Download this application to your PC.

- 2. Open our file manager at https://www.onworks.net/myfiles.php?username=XXXXX, using the username of your choice.

- 3. Upload this application to that file manager.

- 4. Start any OnWorks online OS emulator from this website, preferably the Windows online emulator.

- 5. From the OnWorks Windows OS you have just started, go to our file manager at https://www.onworks.net/myfiles.php?username=XXXXX, using the username of your choice.

- 6. Download the application and install it.

- 7. Download Wine from your Linux distribution's software repositories. Once it is installed, you can double-click the app to run it with Wine. You can also try PlayOnLinux, a graphical front end for Wine that helps you install popular Windows programs and games.

Wine is an open-source Windows compatibility layer that runs Windows programs directly on a Linux desktop, with no copy of Windows required. Essentially, Wine re-implements enough of Windows from scratch to run those applications.

SCREENSHOTS

DeepSeed

DESCRIPTION

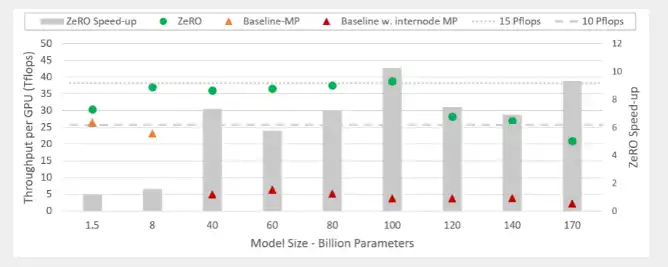

DeepSpeed is a deep learning optimization library that makes distributed training easy, efficient, and effective. DeepSpeed delivers extreme-scale model training for everyone, from data scientists training on massive supercomputers to those training on low-end clusters or even on a single GPU. Using the current generation of GPU clusters with hundreds of devices, DeepSpeed's 3D parallelism can efficiently train deep learning models with trillions of parameters. With just a single GPU, DeepSpeed's ZeRO-Offload can train models with over 10B parameters, 10x bigger than the state of the art, democratizing multi-billion-parameter model training so that many deep learning scientists can explore bigger and better models. DeepSpeed's sparse attention supports input sequences an order of magnitude longer and runs up to 6x faster than dense transformers.
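To give a feel for why offloading matters at the 10B-parameter scale mentioned above, here is a rough back-of-the-envelope sketch. It assumes mixed-precision training with Adam and the commonly cited figure of about 16 bytes of model state per parameter; the function name `model_state_gb` is hypothetical, and activations, buffers, and fragmentation are ignored, so the numbers are only indicative.

```python
# Rough model-state memory estimate for mixed-precision Adam training.
# The per-parameter byte counts below are assumptions, not measured values.
BYTES_FP16_PARAMS = 2      # fp16 copy of the weights
BYTES_FP16_GRADS = 2       # fp16 gradients
BYTES_FP32_OPTIMIZER = 12  # fp32 master weights + Adam momentum + Adam variance

GB = 1e9  # decimal gigabytes, to keep the arithmetic easy to follow


def model_state_gb(num_params: int) -> dict:
    """Return an estimate (in GB) of each model-state component."""
    params = num_params * BYTES_FP16_PARAMS / GB
    grads = num_params * BYTES_FP16_GRADS / GB
    optimizer = num_params * BYTES_FP32_OPTIMIZER / GB
    return {
        "fp16_params_gb": params,
        "fp16_grads_gb": grads,
        "optimizer_gb": optimizer,
        "total_gb": params + grads + optimizer,
    }


estimate = model_state_gb(10_000_000_000)  # a 10B-parameter model
for name, value in estimate.items():
    print(f"{name}: {value:.1f}")
```

Under these assumptions the optimizer state dominates the total, which suggests why moving it (and the gradients) to CPU memory can let a model of this size fit on one GPU.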

Features

- 10x larger models and 10x faster training

- Minimal code change

- Extremely memory efficient

- Extremely long sequence length

- Extremely communication efficient

- An initiative to enable next-generation AI capabilities at scale
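As an illustration of the "minimal code change" point, the sketch below builds a small DeepSpeed-style configuration that enables ZeRO stage 2 with optimizer offload to CPU. The helper name `build_zero_offload_config` and the batch size are hypothetical choices for this example; the configuration keys follow DeepSpeed's JSON config format as far as I know it, so check them against the documentation for the release you install. The call to `deepspeed.initialize` is shown only in comments because it needs DeepSpeed, PyTorch, and a real model.

```python
import json


def build_zero_offload_config(train_batch_size: int = 8) -> dict:
    """Build an illustrative config dict; all values here are assumptions."""
    return {
        "train_batch_size": train_batch_size,
        "fp16": {"enabled": True},
        "zero_optimization": {
            "stage": 2,
            # Keep optimizer state in CPU memory instead of on the GPU.
            "offload_optimizer": {"device": "cpu", "pin_memory": True},
        },
    }


config = build_zero_offload_config()
print(json.dumps(config, indent=2))

# With DeepSpeed installed, an existing PyTorch training loop would
# typically change roughly as follows (not executed here; `model` and
# `batch` are placeholders, and the model is assumed to return the loss):
#
#   import deepspeed
#   engine, optimizer, _, _ = deepspeed.initialize(
#       model=model, model_parameters=model.parameters(), config=config)
#   loss = engine(batch)
#   engine.backward(loss)
#   engine.step()
```

The same dictionary can be written to a JSON file and passed by path instead, which keeps training settings out of the script itself.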

Programming Language

Python

This application can also be fetched from https://sourceforge.net/projects/deepseed.mirror/. It is hosted on OnWorks so that it can be run online easily from one of our free operating systems.